Contactless Ocular-State Interface

"Contactless Ocular-State Interface uses EEG-assisted training and infrared eye tracking to infer selected cognitive and motor-intention states from pupil oscillation and gaze dynamics, without electrodes during real-time use."

Contactless Ocular-State Interface is an experimental neurotechnology project that explores a fundamentally different pathway for brain-computer interaction. Instead of relying on implanted electrodes, scalp EEG electrodes, or other direct neural recording hardware during real-time use, this system attempts to infer latent brain states from high-resolution ocular signals, including eye movement, gaze dynamics, pupil diameter variation, and micro-scale pupil oscillation.

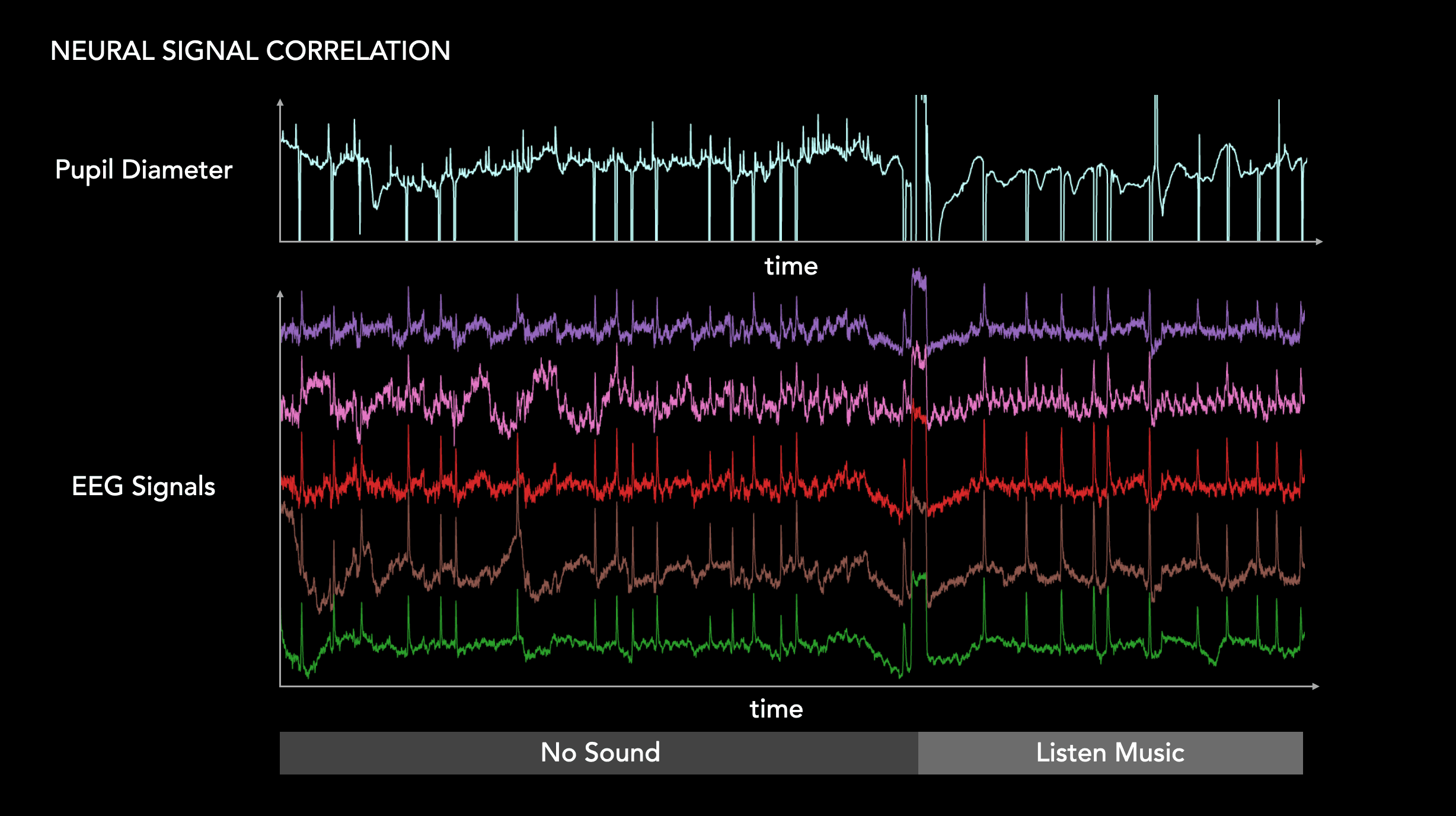

The core hypothesis of the project is that the eye is not only an optical organ, but also an externally observable interface to internal neural state. In particular, the project investigates a phenomenon referred to here as pupil oscillation: repeated, multi-scale fluctuations of the biological pupil that occur across different temporal windows and appear to correlate with arousal, attention, intention, motor preparation, and other brain-state-dependent processes. In neuroscience, spontaneous pupil fluctuations, pupillary unrest, and hippus have long been studied as physiological signals related to arousal and autonomic regulation, while more recent work has shown that pupil dynamics can track cortical state transitions and neuromodulatory activity.

This project builds on that scientific foundation and extends it into a contactless interaction system. During the training phase, a custom-built BCI EEG device and an infrared eye-tracking camera are used simultaneously to collect synchronized brain-wave and ocular data. Participants perform controlled tasks designed to evoke different cognitive, sensory, and motor states, including video-based stimulation, mouse movement, hand-raising tasks, left-right motor intention, and attention-based selection. The synchronized EEG signal serves as a reference channel during dataset construction, while the infrared camera captures the eye and pupil behavior associated with each task.

After a large multimodal dataset is collected, a custom AI model is trained to learn the relationship between EEG-derived brain-state labels and ocular features. The final goal is to remove the EEG device from the real-time interaction loop: once calibrated and trained, the system operates using only an infrared eye-tracking camera, inferring brain state through contactless optical sensing.

In this sense, the project reframes the BCI problem. Traditional BCI systems usually attempt to decode intention directly from neural activity. This project instead investigates whether the eye can function as a measurable external proxy for internal neural dynamics. The result is not mind reading in a literal sense, but a probabilistic inference system that learns how certain ocular micro-patterns correspond to cognitive and motor-intention states.

Scientific Background

Brain-computer interfaces are typically defined as systems that acquire, analyze, and translate brain signals into commands for external devices. Conventional approaches include invasive implants, partially invasive neural interfaces, and non-invasive systems such as EEG-based BCIs. These methods can provide direct access to neural signals, but they also introduce practical limitations: implanted systems require surgery, while non-invasive EEG systems usually require electrodes, careful placement, signal preparation, calibration, and ongoing management of noise and contact quality.

Pupillometry offers a different route. Pupil size is one of the few signals influenced by the brain that can be monitored with low-cost optical devices, and it has been widely used as a metric of brain state, arousal, cognition, and neuromodulatory activity. However, the interpretation of pupil size must be handled carefully: pupil dynamics are influenced by luminance, visual context, autonomic nervous system activity, attention, mental effort, emotional arousal, and multiple neuromodulatory systems. A scientifically rigorous system must therefore separate task-related neural-state information from optical artifacts, lighting effects, blinking, saccades, and head movement.
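As a concrete example of that artifact separation, blinks are usually the first thing removed from a pupil trace: during a blink the tracker loses the pupil and reports a dropout or an implausibly small diameter. The sketch below is not the project's pipeline. It assumes blinks appear as NaN samples or as diameters below a hypothetical `min_valid` threshold, and bridges each gap by linear interpolation between the nearest valid samples.

```python
import math


def interpolate_blinks(pupil, min_valid=0.5):
    """Replace blink/dropout samples by linear interpolation between valid neighbors.

    Assumption: the tracker marks blinks as NaN or as diameters below
    `min_valid` (a hypothetical threshold, in millimetres).
    """
    n = len(pupil)
    valid = [not math.isnan(x) and x >= min_valid for x in pupil]
    out = list(pupil)
    i = 0
    while i < n:
        if valid[i]:
            i += 1
            continue
        # Find the end (exclusive) of this run of invalid samples.
        j = i
        while j < n and not valid[j]:
            j += 1
        left = i - 1 if i > 0 else None
        right = j if j < n else None
        for k in range(i, j):
            if left is not None and right is not None:
                t = (k - left) / (right - left)
                out[k] = pupil[left] + t * (pupil[right] - pupil[left])
            elif left is not None:
                out[k] = pupil[left]   # trailing gap: hold the last valid value
            elif right is not None:
                out[k] = pupil[right]  # leading gap: hold the first valid value
            else:
                out[k] = float("nan")  # no valid data anywhere in the trace
        i = j
    return out
```

Many pupillometry pipelines also widen each gap by a few tens of milliseconds on both sides, because the eyelid distorts the pupil estimate just before and after a blink; that padding is omitted here for brevity.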

Animal studies have shown that pupil fluctuations can track rapid changes in cortical state. In mice, small fluctuations in pupil diameter have been reported to follow state transitions across multiple cortical areas during quiet wakefulness, and pupil-linked dilation has been associated with desynchronized cortical states and enhanced sensory responses. Research has also shown relationships between pupil diameter and activity in the locus coeruleus, colliculi, and cingulate cortex, suggesting that non-luminance-related pupil changes can reflect coordinated neural activity across distributed brain systems rather than a single isolated circuit.

Human studies further support the relevance of pupil-linked arousal. Experiments combining EEG and pupillometry have shown that pupil-linked arousal and cortical desynchronization can jointly influence perception and behavioral performance. Prior human-computer interface research has also demonstrated that pupillometry can be used for contactless selection and typing, including systems that decode covert attention from pupil responses without requiring electrodes.

The Contactless Ocular-State Interface is positioned within this scientific landscape, but its objective differs from that of a standard eye tracker or a conventional pupillometry interface. It does not rely only on gaze position, dwell time, or simple pupil dilation. Instead, it attempts to model a richer set of ocular dynamics, including pupil oscillation patterns, eye movement trajectories, gaze transitions, temporal pupil features, and their correlation with EEG-derived brain-state data.

Research Hypothesis

The central hypothesis is: a sufficiently rich model of eye movement and pupil oscillation can approximate certain brain-state and intention-state signals without requiring real-time electrode-based neural recording.

This hypothesis is based on the idea that the pupil and eye movement system are coupled to multiple layers of neural regulation, including attention, arousal, motor preparation, task engagement, and sensory response. The project treats ocular signals as a high-dimensional physiological output of the nervous system. By learning the mapping between ocular microdynamics and simultaneously recorded EEG states, the system attempts to build a contactless inference model for brain-computer interaction.

This approach does not claim that all neural activity can be reconstructed from the eye. Instead, it focuses on a practical subset of brain states that are useful for interaction: selection, confirmation, attention, intention, directional movement, and state transitions.

System Architecture

The system consists of four major components.

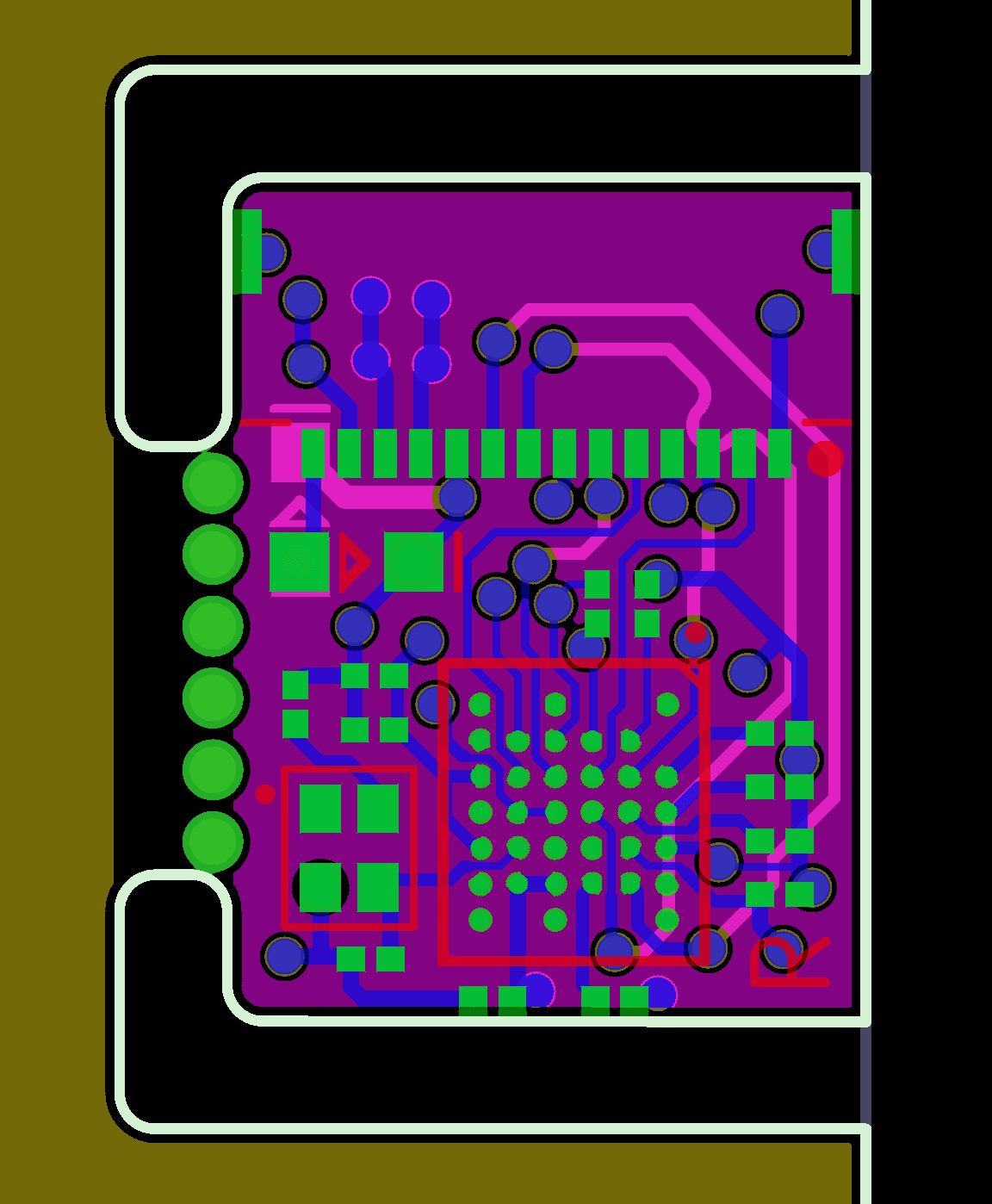

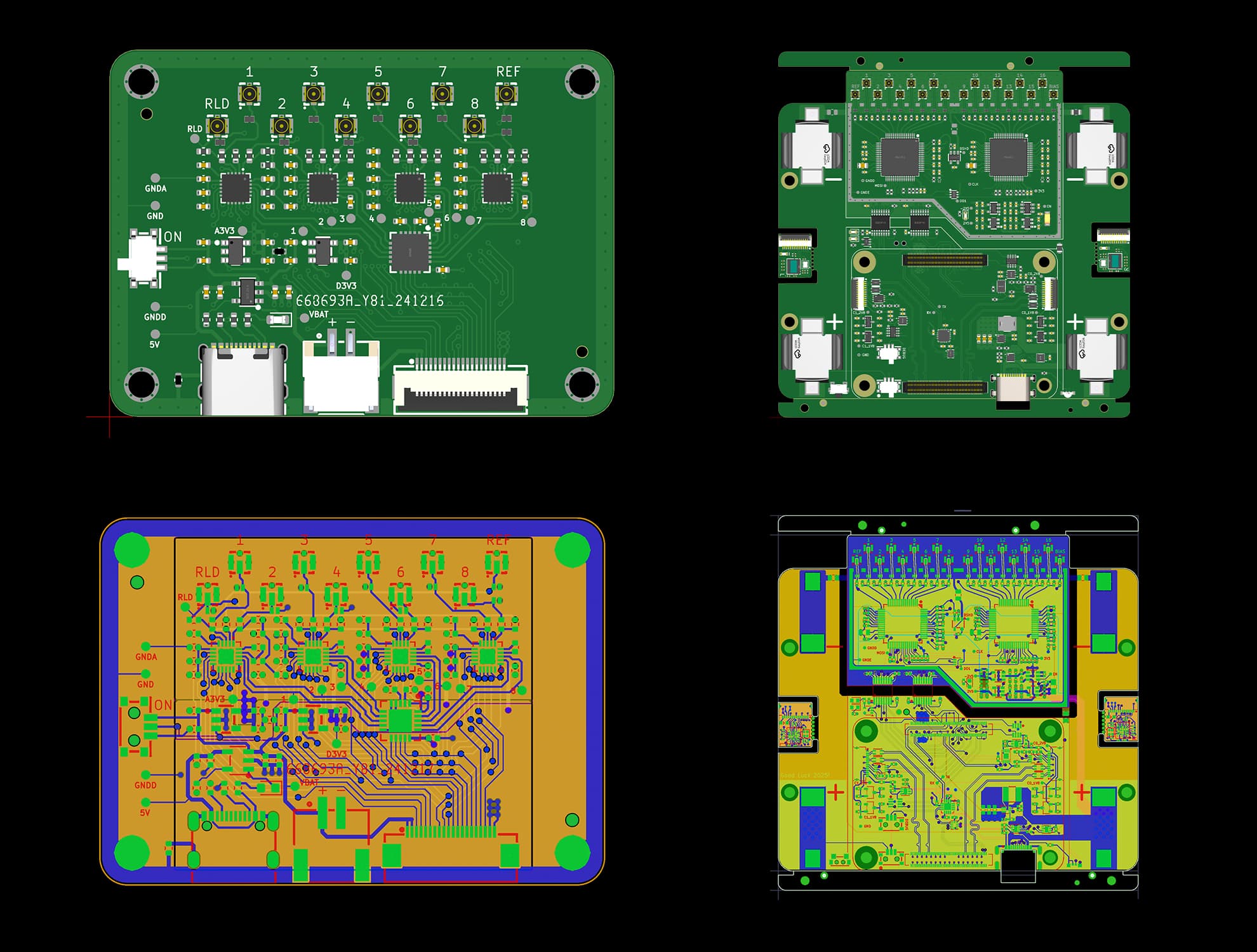

1. Custom EEG Reference Device

A self-developed BCI EEG device is used during the data acquisition and training phase. Its role is to provide synchronized neural reference data while participants perform structured cognitive and motor tasks. The EEG device is not intended to remain necessary during final real-time use. Instead, it functions as a training-stage reference instrument that helps the AI model learn the correspondence between neural activity and ocular behavior.
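The document does not specify how EEG-derived labels are computed. One well-established reference measure for motor tasks is event-related desynchronization (ERD): the drop in mu-band (roughly 8 to 13 Hz) power during motor preparation relative to a rest baseline, ERD% = (A - R) / R * 100. The sketch below computes it with a direct DFT in pure Python. The band limits and the names `band_power` and `erd_percent` are illustrative assumptions; a real pipeline would add tapering, spatial filtering, and artifact rejection.

```python
import math


def band_power(signal, fs, f_lo, f_hi):
    """Mean DFT power of `signal` over bins whose frequency lies in [f_lo, f_hi] Hz.

    A direct O(n^2) DFT without a taper window: fine for a sketch, too slow
    and too leaky for production use.
    """
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # remove the DC offset
    powers = []
    for k in range(1, n // 2 + 1):
        if f_lo <= k * fs / n <= f_hi:
            re = sum(x[t] * math.cos(2 * math.pi * k * t / n) for t in range(n))
            im = -sum(x[t] * math.sin(2 * math.pi * k * t / n) for t in range(n))
            powers.append((re * re + im * im) / n)
    return sum(powers) / len(powers) if powers else 0.0


def erd_percent(task_epoch, rest_epoch, fs, band=(8.0, 13.0)):
    """ERD% = (A - R) / R * 100; negative values mean band power dropped during the task."""
    a = band_power(task_epoch, fs, *band)  # A: band power in the task epoch
    r = band_power(rest_epoch, fs, *band)  # R: band power in the rest baseline
    return (a - r) / r * 100.0
```

A windowed ERD value can then be thresholded into a coarse "motor preparation" versus "rest" label for the matching ocular window; any such threshold would be a project-specific choice.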

2. Infrared Eye-Tracking Camera

An infrared camera captures high-resolution eye and pupil data. Infrared sensing is used to improve pupil visibility and reduce dependence on visible-light imaging conditions. The camera records gaze direction, pupil size, pupil oscillation, eye movement trajectory, blink events, micro-movements, and temporal variation in ocular features.
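Several of the listed features (blink events, micro-movements, eye movement trajectory) depend on first segmenting the gaze stream into saccades and fixations. A common baseline for this is velocity-threshold identification (I-VT). The sketch assumes gaze has already been converted to degrees of visual angle, and its 30 deg/s threshold is a conventional default rather than a value taken from this project.

```python
import math


def detect_saccades(x_deg, y_deg, fs, velocity_threshold=30.0):
    """Label each sample as saccade (True) or not, using a fixed velocity threshold (I-VT).

    x_deg, y_deg: gaze position in degrees of visual angle; fs: sampling rate in Hz.
    velocity_threshold: deg/s (an assumed, commonly used default).
    """
    n = len(x_deg)
    labels = [False] * n
    for i in range(1, n):
        dx = x_deg[i] - x_deg[i - 1]
        dy = y_deg[i] - y_deg[i - 1]
        velocity = math.hypot(dx, dy) * fs  # deg per sample -> deg per second
        labels[i] = velocity > velocity_threshold
    return labels


def group_events(labels):
    """Collapse per-sample labels into (start, end_exclusive) saccade intervals."""
    events, start = [], None
    for i, is_saccade in enumerate(labels):
        if is_saccade and start is None:
            start = i
        elif not is_saccade and start is not None:
            events.append((start, i))
            start = None
    if start is not None:
        events.append((start, len(labels)))
    return events
```

Raw sample-to-sample velocity is noisy, so practical implementations usually smooth gaze before differentiating, and microsaccade detection typically uses an adaptive, noise-scaled threshold rather than a fixed one.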

3. Multimodal Dataset and Synchronization Pipeline

The EEG and eye-tracking streams are recorded simultaneously. Time synchronization is critical because the model must learn not only whether an ocular pattern is associated with a brain state, but also when that relationship occurs. The dataset includes multiple task categories, such as passive visual stimulation, mouse movement, left-right hand movement, hand raising, attention switching, and selection confirmation.
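To make the synchronization requirement concrete: EEG and camera streams typically run at different sampling rates on different clocks, so one stream is resampled onto the other's timestamps after correcting for clock offset. The sketch below linearly interpolates ocular samples onto EEG timestamps. `clock_offset` is a hypothetical constant that would in practice be estimated from a shared trigger or sync pulse, and clock drift is ignored.

```python
from bisect import bisect_left


def resample_to(target_times, source_times, source_values, clock_offset=0.0):
    """Linearly interpolate a source stream (e.g. pupil size) onto target timestamps.

    clock_offset: seconds added to source_times to express them on the target
    clock (hypothetical; estimated from a shared sync event in practice).
    Targets outside the source range get None instead of extrapolated values.
    """
    src = [t + clock_offset for t in source_times]  # assumes sorted timestamps
    out = []
    for t in target_times:
        if t < src[0] or t > src[-1]:
            out.append(None)
            continue
        j = bisect_left(src, t)  # first source index with src[j] >= t
        if src[j] == t:
            out.append(source_values[j])
            continue
        t0, t1 = src[j - 1], src[j]
        v0, v1 = source_values[j - 1], source_values[j]
        out.append(v0 + (t - t0) / (t1 - t0) * (v1 - v0))
    return out
```

Returning None outside the overlap keeps the caller from training on extrapolated ocular values at the edges of a recording.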

4. AI-Based Brain-State Inference Model

A custom AI model is trained on the synchronized EEG-ocular dataset. The model learns temporal relationships between EEG-derived states and eye-derived features. After training, the system is designed to operate with only the infrared eye-tracking camera, using ocular input to infer the user's likely cognitive or motor-intention state.

The modeling pipeline treats brain-state decoding as a probabilistic inference problem rather than a deterministic signal translation problem. This is important because pupil and eye dynamics are influenced by many overlapping physiological and environmental factors. The model therefore requires careful training, artifact rejection, subject calibration, and validation across different tasks.
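The probabilistic framing can be illustrated with a deliberately simple model. The project's custom AI model is not described in detail, so the sketch below swaps in a Gaussian naive Bayes classifier: it fits a per-state mean and variance for each ocular feature and returns a posterior probability for every state rather than a single hard label. The class name, feature vectors, and state names are hypothetical.

```python
import math
from collections import defaultdict


class GaussianStateClassifier:
    """Minimal Gaussian naive Bayes returning a posterior probability per state."""

    def __init__(self, var_floor=1e-6):
        self.var_floor = var_floor  # avoids zero variance on tiny datasets

    def fit(self, X, y):
        groups = defaultdict(list)
        for features, label in zip(X, y):
            groups[label].append(features)
        n = len(X)
        self.stats = {}
        for label, rows in groups.items():
            dims = list(zip(*rows))  # one tuple of values per feature
            means = [sum(d) / len(d) for d in dims]
            variances = [
                max(sum((v - m) ** 2 for v in d) / len(d), self.var_floor)
                for d, m in zip(dims, means)
            ]
            self.stats[label] = (math.log(len(rows) / n), means, variances)
        return self

    def predict_proba(self, x):
        log_post = {}
        for label, (log_prior, means, variances) in self.stats.items():
            ll = log_prior
            for v, m, var in zip(x, means, variances):
                ll += -0.5 * (math.log(2 * math.pi * var) + (v - m) ** 2 / var)
            log_post[label] = ll
        # Normalize with the log-sum-exp trick for numerical stability.
        top = max(log_post.values())
        total = sum(math.exp(v - top) for v in log_post.values())
        return {label: math.exp(v - top) / total for label, v in log_post.items()}
```

Returning a distribution lets downstream logic, such as a confirmation threshold in an interface, decide how much evidence to require before acting.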

Experimental Method

The experimental protocol was designed to expose participants to different cognitive, sensory, and motor conditions while collecting synchronized EEG and infrared ocular data.

Participants were asked to perform activities such as:

- Watching videos with different stimulation levels.

- Moving a mouse cursor.

- Raising the left or right hand.

- Performing left-right intention tasks.

- Maintaining attention on visual targets.

- Confirming target selection through internal intention states.

These experiments were used to build a large dataset containing both neural and ocular signatures. The EEG data provided a reference for brain-state variation, while the infrared camera captured corresponding eye movement and pupil behavior. The training process then attempted to identify repeatable patterns between these two data streams.
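Building a dataset from these tasks usually involves cutting the synchronized recording into fixed windows (epochs) around task event markers, so that each window carries one task label. A minimal sketch, assuming events are given as `(onset_seconds, label)` pairs and using illustrative `pre`/`post` window lengths:

```python
def extract_epochs(times, samples, events, pre=0.5, post=1.5):
    """Cut fixed windows [onset - pre, onset + post) around task events.

    times: sorted sample timestamps in seconds (stream already synchronized).
    events: list of (onset_seconds, task_label) markers; labels such as
    "left_hand" are hypothetical examples.
    Events whose window runs past the recording edges are skipped, so every
    returned epoch has full coverage.
    """
    epochs = []
    for onset, label in events:
        start, stop = onset - pre, onset + post
        if start < times[0] or stop > times[-1]:
            continue
        window = [s for t, s in zip(times, samples) if start <= t < stop]
        epochs.append((label, window))
    return epochs
```

The same event list can be applied to the EEG and ocular streams so that paired epochs share identical boundaries.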

A key focus of the experiment was the analysis of pupil oscillation across multiple time scales. Instead of only measuring average pupil size or simple dilation, the system analyzes the temporal structure of pupil fluctuation, including oscillatory behavior, amplitude change, phase-like timing features, and state-dependent transitions. This allows the model to use the pupil as a dynamic signal rather than a static measurement.
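One simple way to obtain multi-scale oscillation features is a difference-of-moving-averages decomposition: smoothing the pupil trace at several time scales and subtracting adjacent smoothed versions isolates the fluctuations within each band. This is a substitute technique chosen for illustration, not necessarily what the project uses, and the scale values (in seconds) are assumptions.

```python
import math


def moving_average(x, width):
    """Centered moving average; windows shrink at the edges of the trace."""
    half = width // 2
    out = []
    for i in range(len(x)):
        lo, hi = max(0, i - half), min(len(x), i + half + 1)
        out.append(sum(x[lo:hi]) / (hi - lo))
    return out


def multiscale_pupil_features(pupil, fs, scales_s=(0.25, 1.0, 4.0)):
    """RMS pupil fluctuation in bands bounded by successive smoothing scales.

    Band k = MA(scales_s[k]) - MA(scales_s[k + 1]): it keeps fluctuations slower
    than roughly scales_s[k] seconds but faster than roughly scales_s[k + 1].
    """
    smoothed = [moving_average(pupil, max(1, round(s * fs))) for s in scales_s]
    features = {}
    for k in range(len(scales_s) - 1):
        band = [a - b for a, b in zip(smoothed[k], smoothed[k + 1])]
        rms = math.sqrt(sum(v * v for v in band) / len(band))
        features[f"{scales_s[k]}-{scales_s[k + 1]}s"] = rms
    return features
```

A spectral or wavelet decomposition would give sharper band edges; the moving-average version is shown because it is short and easy to check.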

Typing Demonstration

A functional typing demonstration was developed to test the interaction concept.

In the demo, the user controls cursor movement through eye movement. The gaze direction and eye position are used to navigate the interface and select candidate regions on the keyboard. The confirmation step is not based only on gaze dwell time; instead, the system attempts to infer the user's intention to confirm a specific letter from learned ocular and pupil-state patterns.

This creates a two-layer interaction model: first, eye movement provides spatial navigation; second, inferred intention state provides selection confirmation.

The result is a contactless typing interface that combines gaze-based control with brain-state inference. In experimental testing, the system achieved promising speed and accuracy, supporting the feasibility of using infrared ocular sensing as a real-time interaction channel after EEG-assisted training.
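The two-layer interaction model can be sketched as a small state machine. In the sketch below, gaze selects a candidate key, and a letter is committed only when a confirmation probability, assumed to come from some ocular-state model, stays above `threshold` for `hold` consecutive frames on the same key. The `Key` layout, the threshold, and the hold count are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Key:
    char: str
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, x, y):
        return self.x0 <= x < self.x1 and self.y0 <= y < self.y1


class TwoLayerTyper:
    """Layer 1: gaze picks the candidate key. Layer 2: a confirmation
    probability must stay >= threshold for `hold` consecutive frames."""

    def __init__(self, keys, threshold=0.8, hold=3):
        self.keys, self.threshold, self.hold = keys, threshold, hold
        self.candidate, self.count = None, 0
        self.typed = []

    def update(self, gaze_x, gaze_y, p_confirm):
        key = next((k for k in self.keys if k.contains(gaze_x, gaze_y)), None)
        if key is not self.candidate:
            self.candidate, self.count = key, 0  # gaze moved: restart evidence
        if key is not None and p_confirm >= self.threshold:
            self.count += 1
        else:
            self.count = 0
        if key is not None and self.count >= self.hold:
            self.typed.append(key.char)
            self.count = 0  # require fresh evidence before repeating the letter
            return key.char
        return None
```

Resetting the counter whenever gaze moves to a different key keeps a high confirmation probability on one key from leaking into a selection on another.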

Significance

The significance of this project lies in its alternative approach to BCI design. Most BCI research focuses on improving neural signal acquisition: better electrodes, better implants, better EEG caps, or better decoding algorithms. This project asks a different question: can brain-computer interaction be achieved by modeling the external physiological traces of brain state rather than recording brain signals directly during use?

If successful, this approach could reduce several barriers that currently limit BCI accessibility. A contactless system does not require surgery, scalp electrodes, conductive gel, wearable headsets, or direct skin contact. It could potentially be deployed through compact infrared cameras, making it more comfortable, more scalable, and easier to integrate into everyday computing environments.

The project is especially relevant for:

- Assistive communication.

- Contactless human-computer interaction.

- Low-friction BCI research.

- Accessibility interfaces.

- Locked-in or motor-impaired communication systems.

- Cognitive-state-aware computing.

- Adaptive user interfaces.

- Neuroergonomics and attention monitoring.

The method also opens a broader conceptual direction: using peripheral physiological signals as indirect but learnable projections of central neural state. Rather than treating the eye as merely a visual input organ, this project treats it as a measurable output channel of the nervous system.

Scientific Caution and Interpretation

This system should not be understood as a direct replacement for invasive neural recording or high-density EEG in all contexts. Pupil and eye signals are indirect physiological measurements. They are affected by illumination, fatigue, emotional state, visual content, autonomic regulation, and individual differences. Current neuroscience also emphasizes that pupil size is not a one-to-one readout of a single neural circuit; it reflects interactions among multiple brain and autonomic systems.

For this reason, the project uses a data-driven and task-specific modeling strategy. The system is designed to infer practical interaction states, not to reconstruct arbitrary thoughts. Its strength lies in learning repeatable relationships between ocular dynamics and selected cognitive or motor-intention states under controlled conditions.

This distinction is essential. The project is not based on the claim that the eye contains a complete copy of brain activity. Instead, it proposes that certain useful brain-state signals are externally expressed through measurable ocular dynamics, and that these signals can be captured, modeled, and used for contactless interaction.

Future Outlook

Future development will focus on improving model generalization, reducing calibration time, and expanding the range of detectable intention states. A major research direction is the creation of subject-adaptive models that can learn from a small amount of personal calibration data while retaining general features learned from larger population datasets.
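A minimal version of this idea, assuming a population mean and variance per feature are already available: shrink each subject's calibration statistics toward the population values, with a pseudo-count (`prior_strength`, a hypothetical parameter) controlling how much calibration data is needed before the subject's own statistics dominate. The adapted statistics are then used to z-score features before they reach a shared model.

```python
import math


def adapt_stats(pop_mean, pop_var, calib, prior_strength=20.0):
    """Shrink per-feature mean/variance toward population values.

    calib: list of calibration feature vectors from one subject (may be empty).
    prior_strength: pseudo-count of population samples (hypothetical). With few
    calibration samples the result stays near the population; with many it
    approaches the subject's own statistics.
    """
    n = len(calib)
    w = n / (n + prior_strength)  # weight given to the subject's own data
    adapted = []
    for j, (mu0, var0) in enumerate(zip(pop_mean, pop_var)):
        col = [row[j] for row in calib]
        xbar = sum(col) / n if n else mu0
        s2 = sum((v - xbar) ** 2 for v in col) / n if n else var0
        adapted.append((w * xbar + (1 - w) * mu0, w * s2 + (1 - w) * var0))
    return adapted


def normalize(features, adapted):
    """z-score one feature vector with the subject-adapted statistics."""
    return [(x - mu) / math.sqrt(var) for x, (mu, var) in zip(features, adapted)]
```

Blending the variances this way ignores the gap between the subject and population means, which a full empirical-Bayes treatment would account for; the sketch keeps only the core trade-off between personal and population data.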

Another important direction is real-world robustness. Future versions of the system will need to handle variable lighting, different camera positions, head movement, fatigue, blinking, eye dryness, glasses, and individual differences in pupil response. Improved infrared optics, higher-frame-rate imaging, temporal signal processing, and multimodal validation may further improve stability.
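Lighting is the most direct confound for pupil-based inference. A common first-order correction is to regress pupil diameter on measured luminance and keep the residual as the candidate neural-state component. The sketch assumes an instantaneous linear relation, which is a simplification: the pupillary light response is delayed and nonlinear, so a practical version would at least add a lag or a response kernel.

```python
def remove_luminance_component(pupil, luminance):
    """Ordinary least-squares fit pupil ~ a + b * luminance; return (b, residuals).

    The residuals are the part of the pupil trace not explained by a linear,
    instantaneous luminance effect (a simplifying assumption).
    """
    n = len(pupil)
    mx = sum(luminance) / n
    my = sum(pupil) / n
    sxx = sum((l - mx) ** 2 for l in luminance)
    sxy = sum((l - mx) * (p - my) for l, p in zip(luminance, pupil))
    slope = sxy / sxx if sxx else 0.0  # constant luminance: nothing to remove
    intercept = my - slope * mx
    residual = [p - (intercept + slope * l) for p, l in zip(pupil, luminance)]
    return slope, residual
```

A negative slope is expected, since the pupil constricts as luminance rises; the residual trace is what later feature extraction would operate on.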

The system could also be integrated with adaptive interfaces, accessibility tools, VR/AR environments, robotic control systems, and cognitive-state-aware software. In the long term, this project suggests a possible new class of BCI-like systems: contactless neural-state interfaces that use optical physiology and AI-based inference to support communication between humans and machines.

Key Features

- Contactless brain-state inference using infrared eye and pupil sensing.

- EEG-assisted training pipeline with final EEG-free real-time operation.

- Analysis of pupil oscillation, gaze dynamics, and eye movement features.

- Custom AI model for mapping ocular signals to latent cognitive and motor-intention states.

- Typing demo combining gaze-based cursor movement and intention-based confirmation.

- Designed as an alternative pathway to traditional electrode-based BCI systems.

- Research direction applicable to assistive communication, accessibility, and adaptive interfaces.